20 Post-Purchase Survey Questions That Customers Actually Answer

Most post-purchase surveys waste time: yours and your customer's.

They're too long. They arrive too late. And they're built around what the marketing team wants to hear, not what the customer wants to say.

The result? A handful of scattered responses, a dashboard nobody opens, and a false sense that you're listening.

But when surveys are done right, they become one of the fastest feedback loops a business has. They catch problems before they spiral. They reveal why people actually buy. They tell you things your analytics never will.

This guide is for anyone running an online store, managing customer experience, or trying to make smarter decisions with real customer data. No fluff.

What Is a Post-Purchase Survey, Really?

It's a short set of questions sent to customers right after they buy.

That's it.

Not a full satisfaction program. Not a replacement for NPS. Not a 12-question research form disguised as "quick feedback."

It's a snapshot of the customer experience at the exact moment it matters most, right after the purchase decision. The customer still remembers what convinced them, what confused them, and what almost made them leave.

That window closes fast. Use it.

Why It's Worth Doing

It beats last-click attribution. Last-click reporting credits only the final touchpoint before checkout, so it misses whatever first put you on the customer's radar. When someone answers "How did you hear about us?" right after buying, that answer is often more accurate than your analytics dashboard. You might discover a podcast, a Reddit thread, or a friend recommendation is doing more work than any paid ad.

It catches friction quietly. Most customers won't open a support ticket when checkout is confusing. They just won't come back. A short survey gives them an easy way to tell you what went wrong.

It shows you who's actually buying. First-time buyers think differently than repeat customers. Gift buyers behave differently than business buyers. One average hides all of that. Segment your responses and the real story starts to emerge.

It gives you the why behind the what. A rating tells you something went wrong. An open-text answer tells you why. The real value almost always lives in that one sentence after the score.

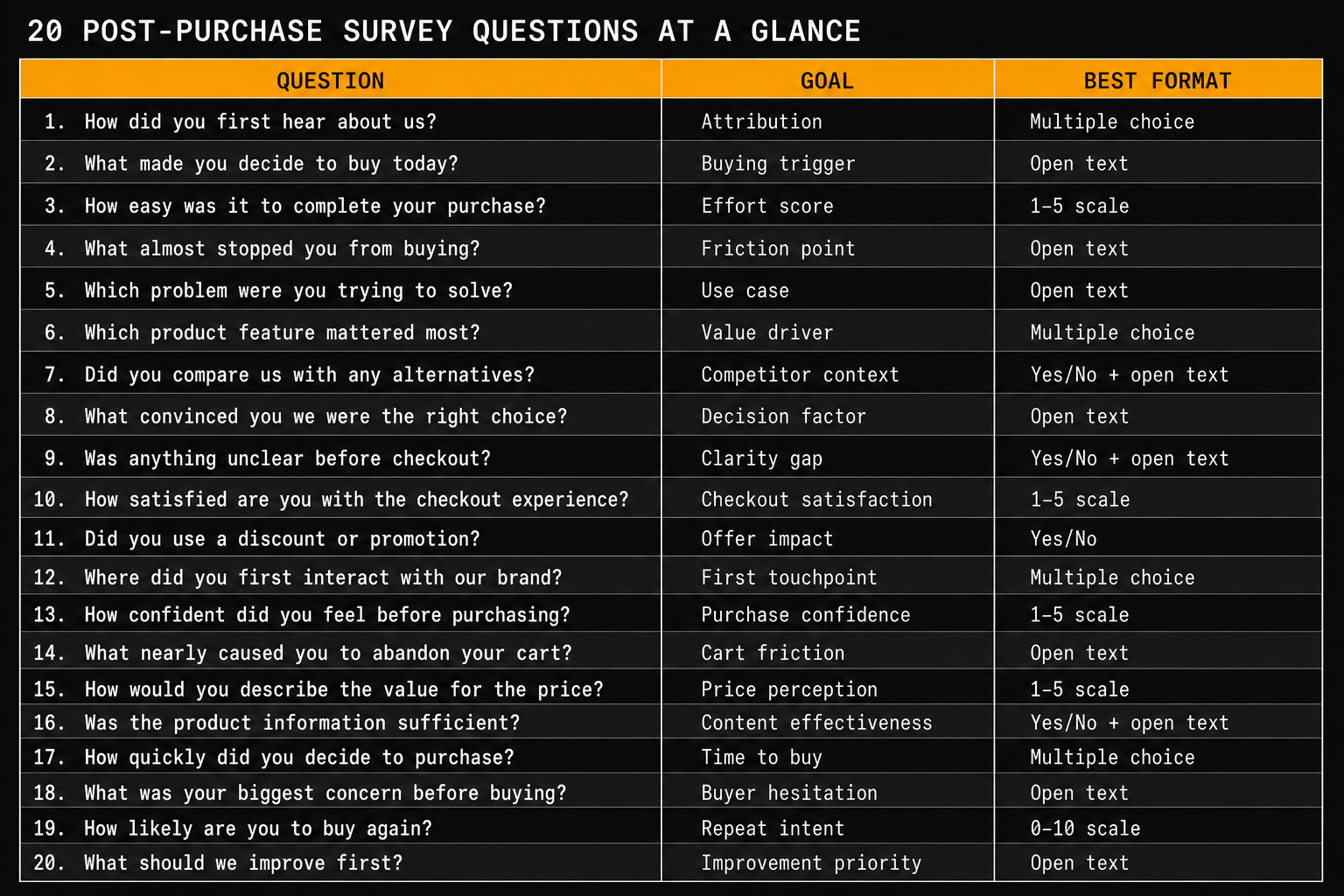

20 Questions Worth Asking

Don't use all of these. That's how good surveys become bad ones. Pick two based on what you're trying to learn right now.

About the Purchase Experience

- How satisfied are you with your purchase experience today? A simple 1 to 5 rating. Easy to answer, easy to track.

- How easy was it to complete your purchase? If buying feels hard, coming back feels harder.

- Did everything go as expected during checkout? Yes or no, then an optional "What went wrong?" One honest answer here can outperform a month of aggregate data.

About Discovery and Attribution

- How did you first hear about us? Give clear options and always include "Other." This question alone often justifies the whole survey program.

- What made you decide to buy today? One of the best open-text questions you can ask. It reveals urgency triggers, trust signals, and the real reason someone moved from interest to purchase.

- Did you consider other brands before choosing us? Yes or no. If yes, which ones? Useful for positioning, copy, and understanding where you're winning.

About Product Fit

- Did the product match what you expected from the description? Scale from "not at all" to "exactly." For physical products, save this one for after delivery, when the customer has the item in hand. If many customers say "not quite," you may have a product page problem, not a product problem.

- What was the main reason you chose this product? Offer options like price, reviews, features, brand trust, or recommendation. The answers often surprise teams.

- Is there anything you wish you'd known before buying? A high-value question for reducing returns and improving product pages.

About Shipping and Fulfillment

- How satisfied are you with the shipping options available? Ask this right after checkout, not after delivery. You're measuring the perception, not the outcome.

- Did you receive accurate delivery time estimates? Yes, No, or Not sure yet. Useful if "where is my order?" tickets are rising.

About Loyalty and Future Intent

- How likely are you to purchase from us again? A 0 to 10 scale gives you an early signal for retention.

- Would you recommend us to a friend? Yes, No, or Maybe. Simpler than NPS and still meaningful right after purchase.

- What would make you more likely to buy again? Ask this when someone gives a neutral or low score. The answers tend to be specific and actionable.

Open Feedback

- Is there anything we could have done better? Many brands avoid this one. That's exactly why it matters.

- What did you like most about this experience? Don't only collect complaints. Positive feedback tells you what to protect and what to repeat.

- Anything else you'd like to share? This catch-all sometimes returns the most useful feedback in the entire survey.

Segmentation and Research

- What best describes you as a shopper? First-time buyer, returning customer, buying as a gift, or business purchase. Once you segment this way, everything else makes more sense.

- How often do you shop in this category? Tells you if the person is an expert or a first-timer, which changes how you read their expectations.

- What other products would you like to see from us? Simple, cheap, and surprisingly useful product research.
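To see why segmenting matters, here is a minimal Python sketch that compares one overall satisfaction average with the average per shopper type. The responses are made up for illustration, and the segment labels and the `mean_by_segment` helper are assumptions, not part of any particular survey tool.

```python
from collections import defaultdict
from statistics import mean

# Illustrative, made-up responses: (shopper segment, 1-5 satisfaction score).
responses = [
    ("first_time", 3), ("first_time", 2),
    ("returning", 5), ("returning", 5),
    ("gift", 4), ("business", 4),
]

def mean_by_segment(rows):
    """Group scores by segment and return the average score for each one."""
    buckets = defaultdict(list)
    for segment, score in rows:
        buckets[segment].append(score)
    return {segment: round(mean(scores), 2) for segment, scores in buckets.items()}

overall = round(mean(score for _, score in responses), 2)
by_segment = mean_by_segment(responses)

print("overall:", overall)
for segment, average in by_segment.items():
    print(segment, average)
```

In this made-up data the overall average looks acceptable, while first-time buyers score noticeably lower than returning customers. That gap is exactly what a single number hides.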

How to Run These Well

Keep it to two questions. A post-purchase survey should not feel like homework. Two questions is the sweet spot. Use branching logic if you need depth, but don't make every customer answer everything.

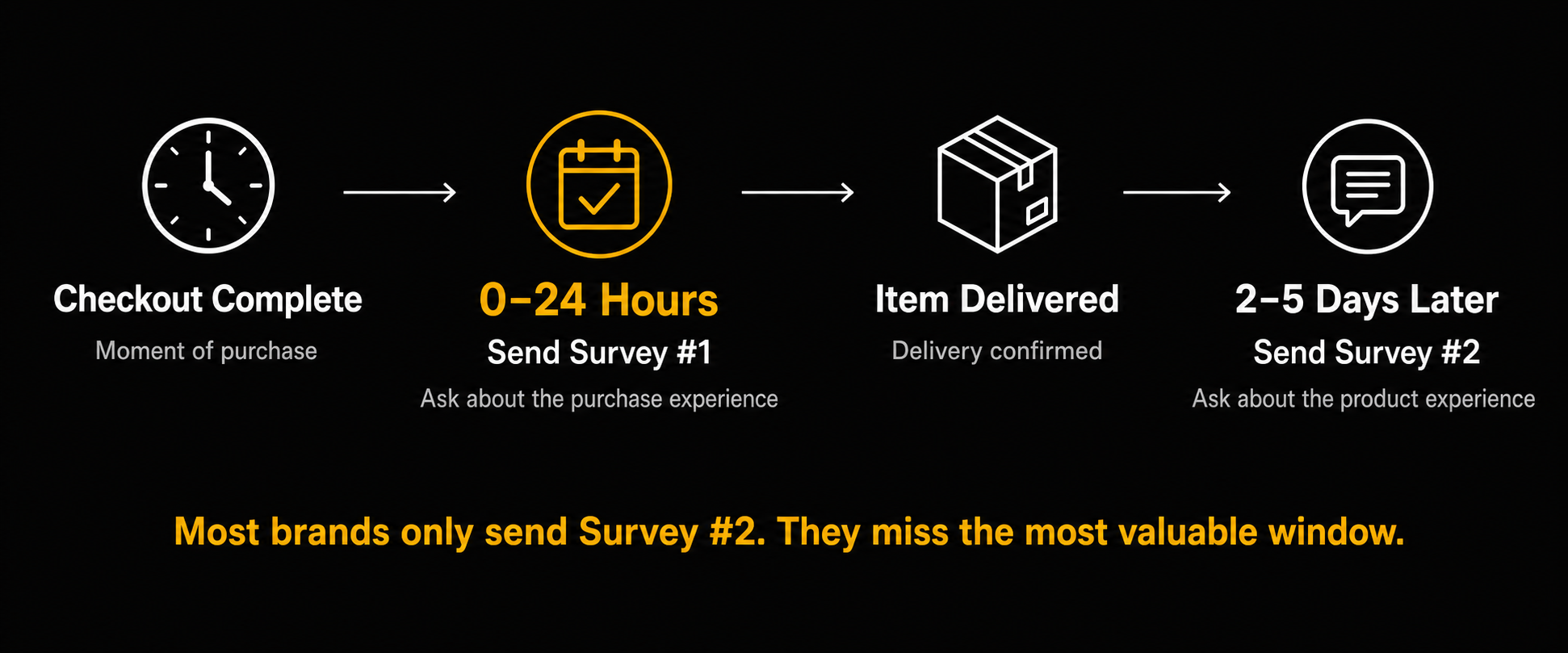
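Branching can be as simple as choosing the second question from the first answer. The sketch below assumes a 1 to 5 satisfaction score and treats 3 or lower as "neutral or low". That cutoff and the `next_question` name are assumptions for illustration; most survey tools let you configure the same rule without code.

```python
# Assumed cutoff: on a 1-5 scale, scores of 3 or below count as neutral or low.
LOW_SCORE_MAX = 3

def next_question(score: int) -> str:
    """Pick the single follow-up question based on a 1-5 satisfaction score."""
    if not 1 <= score <= 5:
        raise ValueError("score must be between 1 and 5")
    if score <= LOW_SCORE_MAX:
        # Neutral or unhappy customers: ask what would bring them back.
        return "What would make you more likely to buy again?"
    # Happy customers: ask what triggered the purchase.
    return "What made you decide to buy today?"

print(next_question(2))
print(next_question(5))
```

Every customer still sees only two questions, but the second one matches how their experience went.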

Time it right. For digital products, send within 24 hours. For physical products, consider two separate touchpoints: one right after checkout asking about the buying experience, and one after delivery asking about the product. Most brands only do the second one and miss the most valuable window completely.
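The timing rules above can be sketched as a small scheduling function. This is a rough illustration, not a prescribed setup: the `plan_surveys` name, the survey labels, and the one-day wait after delivery are all assumptions, since the only rule here is "after delivery."

```python
from datetime import datetime, timedelta
from typing import List, Optional, Tuple

def plan_surveys(
    product_type: str,
    purchased_at: datetime,
    delivered_at: Optional[datetime] = None,
    post_delivery_delay: timedelta = timedelta(days=1),  # assumed default, tune freely
) -> List[Tuple[str, datetime]]:
    """Return (survey_name, send_time) pairs for a single order."""
    if product_type == "digital":
        # For digital products the time returned is a send-by deadline:
        # 24 hours after purchase.
        return [("purchase_experience", purchased_at + timedelta(hours=24))]
    if product_type == "physical":
        # First touchpoint: right after checkout, about the buying experience.
        plan = [("purchase_experience", purchased_at)]
        # Second touchpoint: only once a delivery time is known, about the product.
        if delivered_at is not None:
            plan.append(("product_feedback", delivered_at + post_delivery_delay))
        return plan
    raise ValueError(f"unknown product type: {product_type!r}")

bought = datetime(2024, 1, 1, 12, 0)
delivered = datetime(2024, 1, 5, 9, 0)
for name, send_time in plan_surveys("physical", bought, delivered):
    print(name, send_time.isoformat())
```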

Write like a person. "Rate your satisfaction with your transactional experience" will get ignored. "How did checkout go?" will get answered. The difference is just tone.

Read the open text. Scores give you a number. Open text gives you the story behind the number. If you skip it, you're reading half the data.

Follow up on low scores. A bad score is a customer giving you one more chance. A personal reply asking what happened does more for loyalty than another automated email.

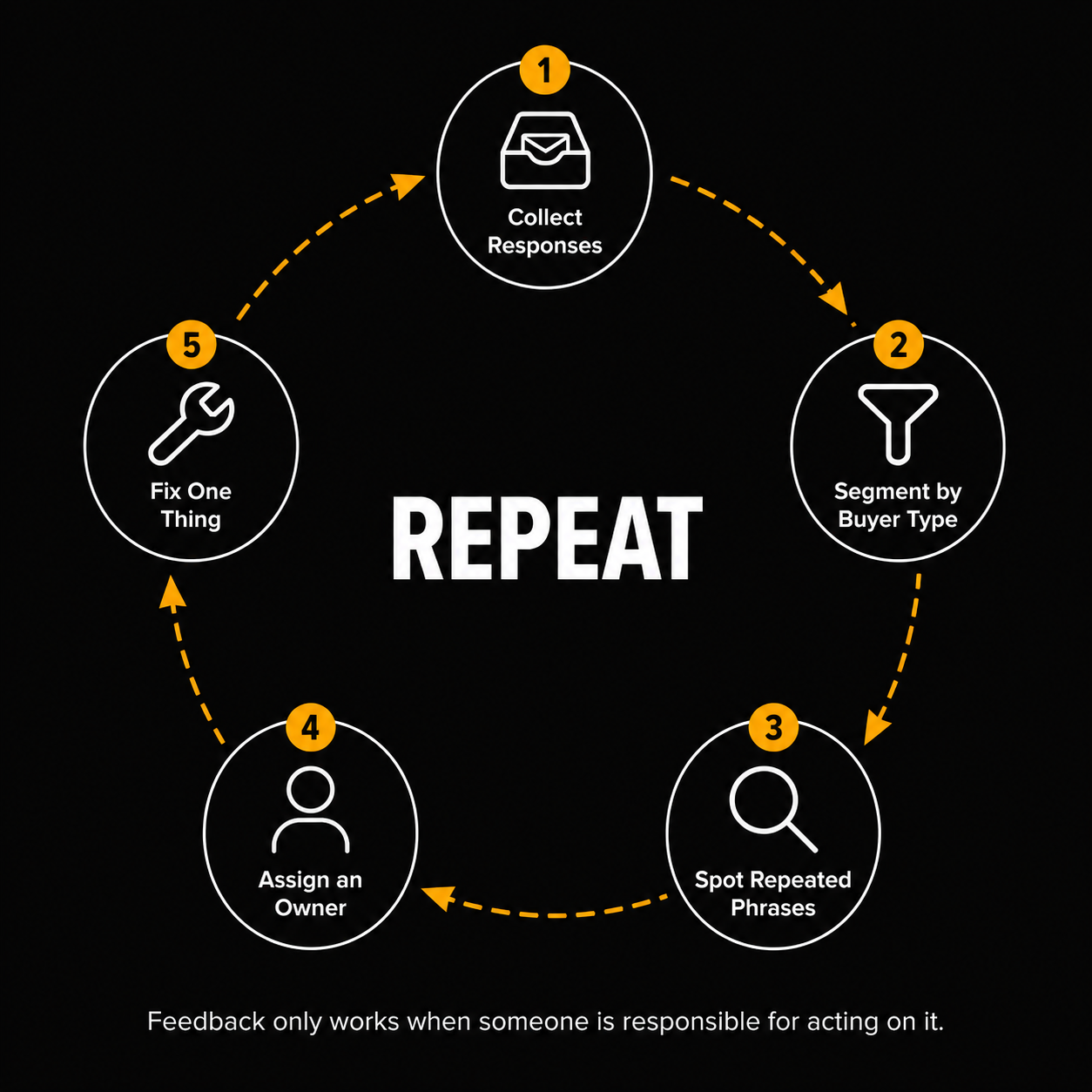

Act on what you find. Set a weekly rhythm. Look for repeated phrases, new friction, and shifts in sentiment. Feedback only matters when someone is responsible for doing something with it.
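For the weekly review, even a crude keyword tally helps repeated phrases stand out. The sketch below is one simple way to do it, assuming you can export open-text answers as a list of strings. The theme names and keywords are hypothetical placeholders to swap for the words your own customers use.

```python
from collections import Counter

# Hypothetical themes and the keywords that signal them; adapt to your own data.
THEMES = {
    "shipping": ("shipping", "delivery"),
    "reviews": ("review",),
    "checkout": ("checkout", "payment"),
}

def count_themes(comments):
    """Count how many comments mention each theme at least once."""
    counts = Counter()
    for comment in comments:
        text = comment.lower()
        for theme, keywords in THEMES.items():
            if any(keyword in text for keyword in keywords):
                counts[theme] += 1
    return counts

# Made-up example comments.
week = [
    "Shipping costs felt unclear",
    "The reviews convinced me",
    "Delivery date was confusing",
    "Loved it",
]
for theme, count in count_themes(week).most_common():
    print(theme, count)
```

Simple substring matching will miss synonyms and misspellings, so treat the tally as a pointer to which comments to read first, not as a replacement for reading them.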

The Simplest Way to Start

If you're not sure where to begin, use this:

Q1: How was your purchase experience today?

Q2: What made you decide to buy from us?

That's it. You get a satisfaction signal and a buying trigger in two questions. You can always build from there once you see what your customers are actually saying.

What to Do With the Answers

Don't just watch the score. Read the language your customers use.

If five people say shipping felt unclear, fix the shipping copy. If ten people say reviews made them trust you, make reviews more visible. If first-time buyers keep asking the same question after purchase, answer it before purchase.

That's the real job of post-purchase feedback. Not reporting. Improvement.

The Part Most Teams Get Wrong

Most post-purchase surveys fail not because of bad questions, but because of what happens after collection. Teams gather feedback, skim the dashboard once, and move on.

The fix is not a better survey. It's a better habit. Someone needs to own the responses, spot the patterns, and close the loop.

When that happens, even two simple questions can change how you write product pages, how you handle checkout, and how you keep customers coming back.

Start Collecting Feedback That Actually Means Something

If this got you thinking about how you're currently listening to customers, that's a good sign.

At Elvan, we help teams run short, focused surveys across email, web, and other channels so feedback reaches you while it's still fresh and useful. NPS, CSAT, CES, post-purchase, and more can be set up in minutes without a developer.

If you want to stop guessing why customers leave and start hearing it directly, give Elvan a try. The first survey takes less time to build than it took to read this article.